Blockchain is a distributed digital ledger technology in which blocks of transaction records can be added and viewed—but can’t be deleted or changed without detection. Here’s where the name comes from: a blockchain is an ever-growing sequential chain of transaction records, clumped together into blocks. There’s no central repository of the chain, which is replicated in each participant’s blockchain node, and that’s what makes the technology so powerful. Yes, blockchain was originally developed to underpin Bitcoin and is essential to the trust required for users to trade digital currencies, but that is only the beginning of its potential.

Blockchain neatly solves the problem of ensuring the validity of all kinds of digital records. What’s more, blockchain can be used for public transactions as well as for private business, inside a company or within an industry group. “Blockchain lets you conduct transactions securely without requiring an intermediary, and records are secure and immutable,” says Mark Rakhmilevich, product management director at Oracle. “It also can eliminate offline reconciliations that can take hours, days, or even weeks.”

That’s the power of blockchain: an immutable digital ledger for recording transactions. It can be used to power anonymous digital currencies—or farm-to-table vegetable tracking, business contracts, contractor licensing, real estate transfers, digital identity management, and financial transactions between companies or even within a single company.

“Blockchain doesn’t have to just be used for accounting ledgers,” says Rakhmilevich. “It can store any data, and you can use programmable smart contracts to evaluate and operate on this data. It provides nonrepudiation through digitally signed transactions, and the stored results are tamper proof. Because the ledger is replicated, there is no single source of failure, and no insider threat within a single organization can impact its integrity.”

It’s All About Distributed Ledgers

Several simple concepts underpin any blockchain system. The first is the block, which is a batch of one or more transactions, grouped together and hashed. The hashing process produces an error-checking and tamper-resistant code that will let anyone viewing the block see if it has been altered. The block also contains the hash of the previous block, which ties them together in a chain. The backward hashing makes it extremely difficult for anyone to modify a single block without detection.
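To make the backward hashing concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular blockchain is implemented: the function names (`block_hash`, `build_chain`, `first_invalid_block`), the JSON encoding, and the all-zeros placeholder for the first block's "previous hash" are all hypothetical choices made for this example.

```python
import hashlib
import json

GENESIS_PREV = "0" * 64  # assumed placeholder "previous hash" for the first block


def block_hash(transactions, prev_hash):
    """Hash a block's transactions together with the previous block's hash."""
    payload = json.dumps({"tx": transactions, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(batches):
    """Turn a list of transaction batches into a list of linked blocks."""
    chain = []
    prev_hash = GENESIS_PREV
    for transactions in batches:
        digest = block_hash(transactions, prev_hash)
        chain.append({"tx": transactions, "prev": prev_hash, "hash": digest})
        prev_hash = digest
    return chain


def first_invalid_block(chain):
    """Return the index of the first block that fails validation, or None."""
    prev_hash = GENESIS_PREV
    for index, block in enumerate(chain):
        # The stored back-link must match the hash of the block before it.
        if block["prev"] != prev_hash:
            return index
        # The stored hash must match a fresh hash of the block's contents.
        if block_hash(block["tx"], block["prev"]) != block["hash"]:
            return index
        prev_hash = block["hash"]
    return None


chain = build_chain([["alice pays bob 5"], ["bob pays carol 2"], ["carol pays dave 1"]])
print(first_invalid_block(chain))  # None: the untouched chain validates

chain[1]["tx"][0] = "bob pays carol 200"  # tamper with the middle block
print(first_invalid_block(chain))  # 1: the altered block no longer matches its hash
```

If the tamperer also recomputes the altered block's hash, the check fails one block later instead, because the next block still stores the old hash. Hiding the change would mean rewriting every later block on every copy of the chain.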

A chain contains collections of blocks, which are stored on decentralized, distributed servers. The more servers the better, with every server containing the same set of blocks and the latest values of information, such as account balances. Multiple transactions are handled within a single block using a data structure called a Merkle tree, or hash tree, which summarizes all of the block’s transactions in a single hash so that a corrupted or fraudulent transaction can be detected. Replicating the chain provides the fault tolerance: if a server goes down, or if a block or chain is corrupted, the missing data can be reconstructed by polling other servers’ chains.
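For readers who want to see the hash tree idea in code, the following is a simplified Python sketch. The names `sha256_hex` and `merkle_root` are made up for this example, and the details (single SHA-256, hex strings joined pairwise, duplicating the last hash on odd-sized levels) are assumptions chosen for readability; real blockchains differ in how they encode and combine hashes.

```python
import hashlib


def sha256_hex(data):
    """Return the SHA-256 digest of a bytes object as a hex string."""
    return hashlib.sha256(data).hexdigest()


def merkle_root(transactions):
    """Reduce a list of transaction strings to a single root hash."""
    if not transactions:
        raise ValueError("a block needs at least one transaction")
    # Leaf level: one hash per transaction.
    level = [sha256_hex(tx.encode("utf-8")) for tx in transactions]
    while len(level) > 1:
        if len(level) % 2 == 1:
            # Assumed convention: pair the odd hash out with a copy of itself.
            level.append(level[-1])
        # Hash each adjacent pair to form the next, shorter level.
        level = [
            sha256_hex((level[i] + level[i + 1]).encode("ascii"))
            for i in range(0, len(level), 2)
        ]
    return level[0]


transactions = ["alice pays bob 5", "bob pays carol 2", "carol pays dave 1"]
root = merkle_root(transactions)

altered = ["alice pays bob 5", "bob pays carol 200", "carol pays dave 1"]
print(root == merkle_root(transactions))  # True: same transactions, same root
print(root == merkle_root(altered))       # False: one changed transaction changes the root
```

The point of the structure is that a single short root hash stands in for every transaction in the block, so changing any one of them is visible at the top of the tree.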

And while the chain itself should be open for validation by any participant, some chains can be implemented with some form of access control to limit viewing of specific data fields. That way, participants can view relevant data, but not everything in the chain. A customer might be able to verify that a contractor has a valid business license and see the firm’s registered address and list of complaints—but not see the names of other customers. The state licensing board, on the other hand, may be allowed to access the customer list or see which jobs are currently in progress.
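The contractor-licensing example can be sketched as a simple per-role field filter. This is only an illustration of the idea in Python: the role names, field names, and the `view_record` helper are hypothetical, and real blockchain platforms typically enforce this kind of restriction with their own mechanisms rather than a lookup table like this one.

```python
# Hypothetical mapping from participant role to the fields that role may view.
VISIBLE_FIELDS = {
    "customer": {"license_valid", "registered_address", "complaints"},
    "licensing_board": {
        "license_valid",
        "registered_address",
        "complaints",
        "customers",
        "jobs_in_progress",
    },
}


def view_record(record, role):
    """Return only the fields of a record that the given role is allowed to see."""
    allowed = VISIBLE_FIELDS.get(role, set())  # unknown roles see nothing
    return {field: value for field, value in record.items() if field in allowed}


contractor = {
    "license_valid": True,
    "registered_address": "12 Main Street",
    "complaints": [],
    "customers": ["Customer A", "Customer B"],
    "jobs_in_progress": ["kitchen remodel"],
}

print(sorted(view_record(contractor, "customer")))
print(sorted(view_record(contractor, "licensing_board")))
```

The customer's view omits the customer list and the jobs in progress, while the licensing board's view includes them.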

When originally conceived, blockchain had a narrow set of protocols. They were designed to govern the creation of blocks, the grouping of hashes into the Merkle tree, the viewing of data encapsulated into the chain, and the validation that data has not been corrupted or tampered with. Over time, creators of blockchain applications (such as the many competing digital currencies) innovated and created their own protocols—which, due to their independent evolutionary processes, weren’t necessarily interoperable. By contrast, the success of general-purpose blockchain services, which might encompass computing services from many technology, government, and business players, created the need for industry standards—such as Hyperledger, a Linux Foundation project.

Read more in my feature article in Oracle Magazine, March/April 2018, “It’s All About Trust.”

Far too many companies fail to learn anything from security breaches. According to CyberArk, cyber-security inertia is putting organizations at risk. Nearly half — 46% — of enterprises say their security strategy rarely changes substantially, even after a cyberattack.

DevOps is a technology discipline well-suited to cloud-native application development. When it only takes a few mouse clicks to create or manage cloud resources, why wouldn’t developers and IT operations teams work in sync to get new apps out the door and in front of users faster? The DevOps culture and tactics have done much to streamline everything from coding to software testing to application deployment.

When the little wireless speaker in your kitchen acts on your request to add chocolate milk to your shopping list, there’s artificial intelligence (AI) working in the cloud, to understand your speech, determine what you want to do, and carry out the instruction.

The bad news: There are servers used in serverless computing. Real servers, with whirring fans and lots of blinking lights, installed in racks inside data centers inside the enterprise or up in the cloud.

Those are two popular ways of migrating enterprise assets to the cloud:

To get the most benefit from the new world of cloud-native server applications, forget about the old way of writing software. In the old model, architects designed software. Programmers wrote the code, and testers tested it on a test server. Once the testing was complete, the code was “thrown over the wall” to administrators, who installed the software on production servers, and who essentially owned the applications moving forward, only going back to the developers if problems occurred.

“One of these things is not like the others,” the television show Sesame Street taught generations of children. Easy. Let’s move to the next level: “One or more of these things may or may not be like the others, and those variances may or may not represent systems vulnerabilities, failed patches, configuration errors, compliance nightmares, or imminent hardware crashes.” That’s a lot harder than distinguishing cookies from brownies.

IT managers shouldn’t have to choose between cloud-driven innovation and data-center-style computing. Developers shouldn’t have to choose between the latest DevOps programming using containers and microservices, and traditional architectures and methodologies. CIOs shouldn’t have to choose between a fully automated and fully managed cloud and a self-managed model using their own on-staff administrators.

When was the last time most organizations discussed the security of their Oracle E-Business Suite? How about SAP S/4HANA? Microsoft Dynamics? IBM’s DB2? Discussions about on-prem server software security too often begin and end with ensuring that operating systems are at the latest level, and are current with patches.

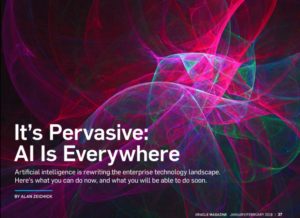

The water is rising up over your desktops, your servers, and your data center. Glug, glug, gurgle.

A major global cyberattack could cost US$53 billion of economic losses. That’s on the scale of a catastrophic disaster like 2012’s

I am unapologetically mocking this company’s name.

An organization’s Chief Information Security Officer’s job isn’t ones and zeros. It’s not about unmasking cybercriminals. It’s about reducing risk for the organization and enabling executives and line-of-business managers to innovate and compete safely and securely. While the CISO is often seen as the person who loves to say “No,” in reality the CISO wants to say “Yes.” The job, after all, is to make the company thrive.

Cybercriminals want your credentials and your employees’ credentials. When those hackers succeed in stealing that information, it can be bad for individuals – and even worse for corporations and other organizations. This is a scourge that’s bad, and it will remain bad.

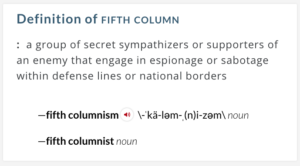

I can’t trust the Internet of Things. Neither can you. There are too many players and too many suppliers of the technology that can introduce vulnerabilities in our homes, our networks – or elsewhere. It’s dangerous, my friends. Quite dangerous. In fact, it can be thought of as a sort of Fifth Column, but not in the way many of us expected.

You keep reading the same three names over and over again. Amazon Web Services. Google Cloud Platform. Microsoft Windows Azure. For the past several years, that’s been the top tier, with a wide gap between them and everyone else. Well, there’s a fourth player, the IBM cloud, with their SoftLayer acquisition. But still, it’s AWS in the lead when it comes to Infrastructure-as-a-Service (IaaS) and Platform-as-a-Service (PaaS), with many estimates showing about a 37-40% market share in early 2017. In second place, Azure, at around 28-31%. Third place, Google, at around 16-18%. Fourth place, IBM SoftLayer, at 3-5%.

Cloud-based firewalls come in two delicious flavors: vanilla and strawberry. Both flavors are software that checks incoming and outgoing packets to filter against access policies and block malicious traffic. Yet they are also quite different. Think of them as two essential network security tools: Both are designed to protect you, your network, and your real and virtual assets, but in different contexts.

Want to open up your eyes, expand your horizons, and learn from really smart people? Attend a conference or trade show. Get out there. Meet people. Have conversations. Network. Be inspired by keynotes. Take notes in classes that are delivering great material, and walk out of boring sessions and find something better.
